The Visual System as Statistician

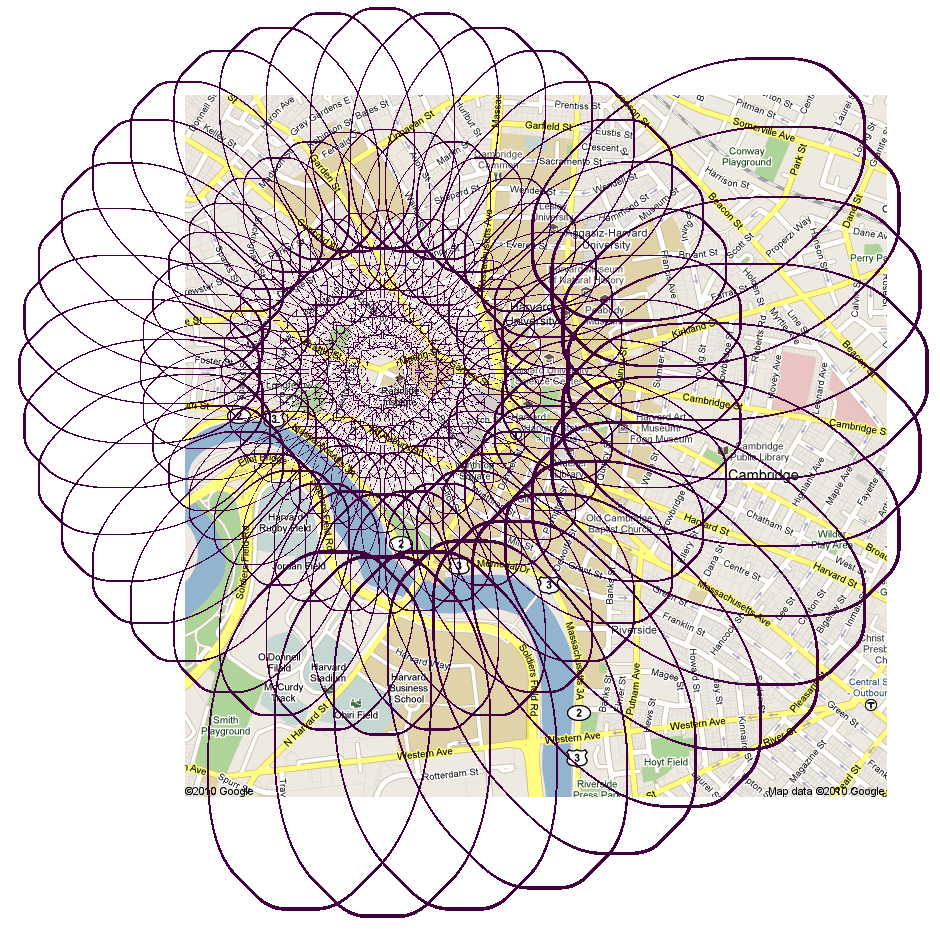

A visual system cannot process everything with full fidelity, so it must keep some information and discard the rest. In particular, more than 99% of the visual field lies outside the fovea. It is the task of peripheral vision to condense this mass of information into a succinct but useful representation. We hypothesize that the visual system deals with its limited capacity by representing its input in terms of a rich set of local image statistics, where the local regions grow, and the representation becomes less precise, with distance from fixation. This representation captures sophisticated image features at the expense of the spatial localization of those features.
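The idea of pooling regions that grow with distance from fixation can be illustrated with a minimal sketch. It assumes a linear growth rule and summarizes each region by only its mean and variance; the function names, the `scale` factor of 0.5, the one-pixel minimum radius, and the circular region shape are all illustrative assumptions, not the model's actual parameters or statistics.

```python
import numpy as np

def pooling_radius(eccentricity, scale=0.5, min_radius=1.0):
    """Radius (in pixels) of a pooling region.

    Assumption: the radius grows linearly with eccentricity, with a
    floor of `min_radius` so regions near fixation are not empty.
    """
    return np.maximum(min_radius, scale * np.asarray(eccentricity, dtype=float))

def pooled_stats(image, center, fixation, scale=0.5):
    """Summarize a circular region around `center` by its mean and variance.

    Only these summary numbers are kept; where each pixel sat inside
    the region is discarded.
    """
    cy, cx = center
    fy, fx = fixation
    eccentricity = np.hypot(cy - fy, cx - fx)
    radius = float(pooling_radius(eccentricity, scale))
    yy, xx = np.indices(image.shape)
    mask = (yy - cy) ** 2 + (xx - cx) ** 2 <= radius ** 2
    values = image[mask]
    return {
        "radius": radius,
        "n_pixels": int(values.size),
        "mean": float(values.mean()),
        "var": float(values.var()),
    }

# Hypothetical stimulus: random noise, fixation at the image center.
rng = np.random.default_rng(0)
image = rng.random((64, 64))
fixation = (32, 32)
near = pooled_stats(image, center=(32, 34), fixation=fixation)
far = pooled_stats(image, center=(32, 60), fixation=fixation)
```

In this sketch the region far from fixation pools over many more pixels than the region near it, yet both are reduced to the same small number of summary values, which is the sense in which the peripheral representation is less precise.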

Our "working" set of statistics consists of the joint statistics of the responses of "cells" sensitive to different positions, phases, orientations, and scales. This representation predicts the subjective jumble of features often associated with crowding. We can characterize the information available from this statistical representation by using texture synthesis techniques from computer graphics to synthesize new samples with approximately the same statistics as the original stimulus. We call these syntheses "mongrels." Mongrels let one view the "equivalence classes" of our model, i.e., the sets of stimuli that lead to the same neural representation.
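A toy version of the equivalence-class idea can be sketched as follows. It is a drastic simplification, not the working set of statistics described above: the "cells" here are assumed to be simple directional derivatives at a single scale, and the only joint statistic is their covariance over one pooling region. The function names and the test patches are hypothetical.

```python
import numpy as np

def oriented_responses(patch, n_orientations=4):
    """Responses of toy oriented "cells": directional derivatives of the patch.

    Assumption: one scale and no phase variation, unlike the richer
    set of cells the text describes.
    """
    gy, gx = np.gradient(patch.astype(float))
    thetas = np.arange(n_orientations) * np.pi / n_orientations
    return np.stack([np.cos(t) * gx + np.sin(t) * gy for t in thetas])

def joint_statistics(patch, n_orientations=4):
    """Covariance between orientation channels, pooled over the whole patch."""
    responses = oriented_responses(patch, n_orientations)
    flat = responses.reshape(n_orientations, -1)
    return np.cov(flat)

# Two hypothetical stimuli: the same small blob at two different locations.
patch_a = np.zeros((32, 32))
patch_a[9:12, 9:12] = 1.0
patch_b = np.zeros((32, 32))
patch_b[19:22, 21:24] = 1.0

stats_a = joint_statistics(patch_a)
stats_b = joint_statistics(patch_b)
same_class = bool(np.allclose(stats_a, stats_b))
```

The two patches differ pixel by pixel but produce the same pooled statistics, so under this toy representation they fall into the same equivalence class: the feature content survives while its location within the region is lost. Synthesizing a mongrel would go further and search for new images whose statistics match those of the original, which this sketch does not attempt.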

We have shown that the difficulty of performing an identification task with this representation of the stimuli is correlated with performance in various tasks, including visual search and object identification under crowding. The model shows promise for predicting a wide variety of visual phenomena, from optical illusions to scene perception.