Ruth Rosenholtz

Texture Perception

It’s Not Just for Segmentation and Shape from Texture

In the past, texture perception has largely been studied because changes in texture can signal either a boundary between objects (texture segmentation) or a change in orientation of a surface (shape from texture). While we have studied these problems, we also believe that texture perception gives us insight into a broader class of visual phenomena, including visual crowding, visual search, object recognition, and set perception.

Understanding Texture Perception is Critical for Understanding Visual Crowding, Visual Search, and Perhaps Perception in General

(More detail, and pictures, to follow shortly. Work done in conjunction with Benjamin Balas, Alvin Raj, Lisa Nakano, Livia Ilie, Ronald van den Berg, and Stephanie Chan.)

Crowding refers to visual phenomena in which identification of a target stimulus is significantly impaired by the presence of nearby stimuli, or flankers. This is particularly noticeable in peripheral vision, where even fairly distant items cause crowding.

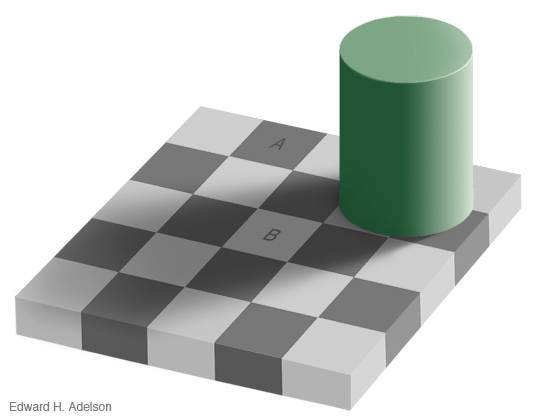

We propose that the visual system locally represents stimuli by the joint statistics of the responses of cells sensitive to different positions, phases, orientations, and scales. This statistical, or “texture,” representation predicts the subjective “jumble” of features often associated with crowding. We show that the difficulty of performing an identification task with this representation of the stimuli is correlated with performance under conditions of crowding.

Furthermore, for a simple stimulus with no flankers, this texture representation can be adequate to specify the stimulus with some position invariance. This provides evidence for a unified neuronal mechanism for perception across a wide range of conditions, including crowded perception, ordinary pattern recognition, and texture perception. It also suggests a reason for the odd phenomenon of crowding: perhaps it is merely the natural outcome of the tradeoff the visual system must strike between sensitivity to differences and tolerance to irrelevant changes such as small shifts in position.
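
The tradeoff between sensitivity and position tolerance can be illustrated with a toy example. The sketch below is not our model: it assumes a one-dimensional binary "image", two hypothetical difference filters standing in for cells at two scales, and circular wrap-around at the edges of the pooling region. It pools the energy of each filter and the product of the two filters' responses over the whole region, and shows that a shifted copy of a pattern yields exactly the same pooled statistics, while a different pattern does not.

```python
def filter_responses(signal, scale):
    """Circular difference filter at a given scale (a stand-in for a cell type)."""
    n = len(signal)
    return [signal[(i + scale) % n] - signal[i] for i in range(n)]


def pooled_statistics(signal, scales=(1, 2)):
    """Pool filter energies and pairwise response products over the region."""
    n = len(signal)
    responses = {s: filter_responses(signal, s) for s in scales}
    stats = {}
    # Marginal statistic: mean squared response of each filter.
    for s in scales:
        stats[("energy", s)] = sum(x * x for x in responses[s]) / n
    # Joint statistic: mean product of responses of each pair of filters.
    for i, a in enumerate(scales):
        for b in scales[i + 1:]:
            stats[("product", a, b)] = (
                sum(x * y for x, y in zip(responses[a], responses[b])) / n
            )
    return stats


pattern = [0, 0, 1, 1, 0, 1, 0, 0]
shifted = pattern[3:] + pattern[:3]      # same pattern, different position
other = [0, 1, 0, 1, 0, 1, 0, 0]         # a different pattern

same_class = pooled_statistics(pattern) == pooled_statistics(shifted)
distinguishable = pooled_statistics(pattern) != pooled_statistics(other)
```

In this toy setting, `pattern` and `shifted` fall in the same equivalence class of the pooled representation, which is the sense in which a statistical representation buys tolerance to position at the cost of losing exact location.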

In addition, from the statistical representation of a stimulus, one can easily create visualizations of the predicted appearance of that stimulus under crowding. We call these visualizations “mongrels,” and show that they are highly useful in the study of crowding. Mongrels enable one to view the “equivalence classes” of our model, i.e. the sets of stimuli that lead to the same neural representation.

The effectiveness of this model has implications for other aspects of visual perception. Given that the visual system perceives a jumble of features in the periphery, what does this say about visual search? For target + distractor pairings that are difficult to discriminate peripherally, an observer will have to move their eyes (or perhaps attention) to determine whether a target is present. Our mongrels allow us to predict which target + distractor pairs should be difficult to discriminate. This texture representation of stimuli in the periphery (or perhaps of stimuli that are merely unattended) correctly predicts the difficulty of conjunction search for a vertical white bar among horizontal white and vertical black bars: the visual system essentially “hallucinates” targets, making search difficult. The representation also correctly predicts the difficulty of search for a T among L’s, and the ease of many feature searches.

Furthermore, if this texture representation is all that is available in the periphery, and peripheral vision is what is used to plan future eye movements, then perhaps the top-down information we can use to guide search for a target is limited by a texture representation of the target. Early results show that this is predictive of search difficulty in real-world scenes.

Set Perception

(Work done in conjunction with George Alvarez.)

The visual system accurately estimates simple set statistics, such as average size, given only brief displays (Ariely, 2001; Chong & Treisman, 2003, 2005a, 2005b). Is the accuracy surprising, given feature estimates for individual items? Are all items in a display used, or just a subset? Does the visual system compute set statistics simply to judge the sample mean in a display? Or to make decisions about whether different image regions come from different processes, as suggested by work in visual search and texture segmentation (outlier items “pop out”, and significantly different regions segment)?

We show that performance is qualitatively different from what would be expected under a model in which the visual system computes the average of all display items. It is well modeled by simple averaging models that sample only a subset of the display, and/or by models that otherwise capture the uncertainty in the population mean when presented with more variable samples.
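
To make the subset-sampling idea concrete, here is a minimal simulation sketch. It is not the modeling code we distribute: the function names, the Gaussian distribution of item sizes, and the parameters `n_sampled` and `noise_sd` are all assumptions chosen for illustration. On each simulated trial the observer averages a random subset of the items, each corrupted by internal noise, and we measure the RMS error relative to the true display mean.

```python
import math
import random
import statistics


def estimate_mean(sizes, n_sampled, noise_sd, rng):
    """Average a random subset of items, each with additive internal noise."""
    subset = rng.sample(sizes, n_sampled)
    noisy = [s + rng.gauss(0.0, noise_sd) for s in subset]
    return statistics.fmean(noisy)


def rms_error(n_items, n_sampled, set_sd, noise_sd, trials=2000, seed=0):
    """RMS error of the estimated mean relative to the true display mean."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        # Hypothetical display: item sizes drawn around a mean of 1.0.
        sizes = [rng.gauss(1.0, set_sd) for _ in range(n_items)]
        estimate = estimate_mean(sizes, n_sampled, noise_sd, rng)
        total += (estimate - statistics.fmean(sizes)) ** 2
    return math.sqrt(total / trials)


full_average = rms_error(n_items=16, n_sampled=16, set_sd=0.2, noise_sd=0.0)
subset_low_var = rms_error(n_items=16, n_sampled=4, set_sd=0.2, noise_sd=0.0)
subset_high_var = rms_error(n_items=16, n_sampled=4, set_sd=0.4, noise_sd=0.0)
```

With no internal noise, averaging every item recovers the display mean, whereas a subset-sampling observer makes errors that grow as the display becomes more variable. That dependence on set variability is the kind of qualitative signature that distinguishes the two classes of model.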

We demonstrate that modeling is critical for understanding set perception. To aid predictions and experimental design to further explore set perception, we make modeling code available at http://dspace.mit.edu/handle/1721.1/7508

Texture Segmentation

Is the human visual system designed to compute statistical tests like t-tests? Experiments and modeling in our lab show that such a test is a good model of a range of “pre-attentive” texture segmentation results. In this model, the visual system first extracts the equivalent of the mean and variance of various features, such as orientation, on each side of a candidate texture boundary. The variance includes internal noise in the feature estimates, which may depend upon the viewing time as well as the expertise of the observer. The size of the area over which these statistics are computed seems more inherent to the system, as we have found that it varies little between observers. The observer then detects a texture boundary if the equivalent of a t-test reveals a “significant” difference between the samples on the two sides of the boundary.
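
The decision rule described above can be sketched in a few lines. This is an illustration under stated assumptions rather than the published model: it uses a Welch-style t statistic, treats orientation in degrees as an ordinary linear variable (ignoring its circular nature), adds internal noise as a fixed variance term, and uses a hypothetical criterion `threshold`.

```python
import math
import statistics


def boundary_t_statistic(left, right, internal_noise_sd=0.0):
    """Welch-style t statistic for feature samples on two sides of a boundary.

    Internal noise is modeled (as an assumption) by adding its variance to
    the sample variance on each side.
    """
    mean_left, mean_right = statistics.fmean(left), statistics.fmean(right)
    var_left = statistics.variance(left) + internal_noise_sd ** 2
    var_right = statistics.variance(right) + internal_noise_sd ** 2
    standard_error = math.sqrt(var_left / len(left) + var_right / len(right))
    return (mean_left - mean_right) / standard_error


def detects_boundary(left, right, internal_noise_sd=0.0, threshold=2.0):
    """Report a boundary when the difference is 'significant'."""
    t = boundary_t_statistic(left, right, internal_noise_sd)
    return abs(t) > threshold


# Orientations (degrees) of elements on either side of a candidate boundary.
left_side = [0, 2, -2, 1, -1]
right_side = [30, 32, 28, 31, 29]

clear_boundary = detects_boundary(left_side, right_side)
masked_by_noise = detects_boundary(left_side, right_side, internal_noise_sd=30.0)
```

In this sketch, raising `internal_noise_sd` (as might happen with a shorter viewing time) lowers the t statistic for the same physical stimulus, so a boundary that is obvious with low noise can fall below criterion with high noise.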

Bibliography

- C. H. Attar, K. Hamburger, R. Rosenholtz, H. Gotzl, & L. Spillmann, “Uniform versus random orientation in fading and filling-in.” Vision Research 47(24):3041-3051, 2007.

- R. Rosenholtz, “Significantly different textures: A computational model of pre-attentive texture segmentation.” Proc. European Conference on Computer Vision, D. Vernon (Ed.), Springer Verlag, LNCS 1843, Dublin, Ireland, pp. 197-211, June 2000.

Shape-From-Texture

An image of a regularly textured surface will contain texture elements of irregular size and shape. Texture that is farther away will appear smaller and denser, and the shape of the texture elements will vary with the orientation and curvature of the textured surface. We model this change in texture appearance, locally, by an affine transformation, and relate the affine transformations between a patch and its neighbors to the orientation and curvature of the local surface.
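
As a simplified illustration of how an affine transformation encodes surface orientation, consider a planar patch viewed under orthographic projection (an assumption made here for brevity; the model above relates transformations between neighboring patches and is not limited to this case). A patch with slant σ and tilt τ compresses texture by cos σ along the tilt direction and leaves the perpendicular direction unchanged. The function names below are hypothetical.

```python
import math


def foreshortening_matrix(slant, tilt):
    """2x2 affine map R(tilt) * diag(cos(slant), 1) * R(tilt)^T (angles in radians)."""
    c, s = math.cos(tilt), math.sin(tilt)
    k = math.cos(slant)  # compression factor along the tilt direction
    return (
        (k * c * c + s * s, (k - 1.0) * c * s),
        ((k - 1.0) * c * s, k * s * s + c * c),
    )


def apply(matrix, vector):
    """Apply a 2x2 matrix to a 2D vector."""
    (a, b), (c, d) = matrix
    x, y = vector
    return (a * x + b * y, c * x + d * y)


def area_ratio(matrix):
    """Determinant: how much the area of a texture element shrinks."""
    (a, b), (c, d) = matrix
    return a * d - b * c


def slant_from_matrix(matrix):
    """Recover slant from the area ratio, valid under the orthographic assumption."""
    return math.acos(max(-1.0, min(1.0, area_ratio(matrix))))


A = foreshortening_matrix(math.radians(60), math.radians(30))
recovered_slant_deg = math.degrees(slant_from_matrix(A))
```

Under these assumptions a circular texture element maps to an ellipse whose minor axis points along the tilt direction, and the area ratio alone is enough to recover the slant.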

Shape from texture, then, is not so different from structure from motion or from binocular stereopsis. In structure from motion, two frames give slightly different views of the same surface, allowing estimation of shape. In stereopsis, it is the two eyes that give the two different views. In shape from texture, the statistical homogeneity of the texture allows us to get two views of the same texture from a single image, and thus to estimate the shape. With this view, techniques for dealing with multiple motions in a single image can also deal with multiple textures, e.g. a view of grass through the slats of a fence.

Bibliography

- J. Malik & R. Rosenholtz, “Computing local surface orientation and shape from texture for curved surfaces.” International Journal of Computer Vision 23(2):149-168, 1997.

- R. Rosenholtz & J. Malik, “Surface orientation from texture: Isotropy or homogeneity (or both)?” Vision Research 37(16):2283-2293, 1997.

- M. Black & R. Rosenholtz, “Robust estimation of multiple surface shapes from occluded textures.” In Proc. IEEE International Symposium on Computer Vision, (Coral Gables, FL), November 1995.